Is Anthropic Winning the AI Race by Trying to Lose?

Scott Barron

2/6/2026 | 4 min read

Why Anthropic is Winning the AI Race by Trying to Lose

The "Plutonium" Paradox

There is a specific kind of anxiety reserved for a scientist who realizes their experiment has succeeded too well. In a Silicon Valley landscape crawling with mercenaries and "move fast and break things" opportunists, Dario Amodei stands out as a man almost tortured by the speed of his own success. While his peers chase the prestige of the NASDAQ, Amodei has approached artificial intelligence not as a magical toy, but as a dangerous industrial material that belongs inside a containment vessel.

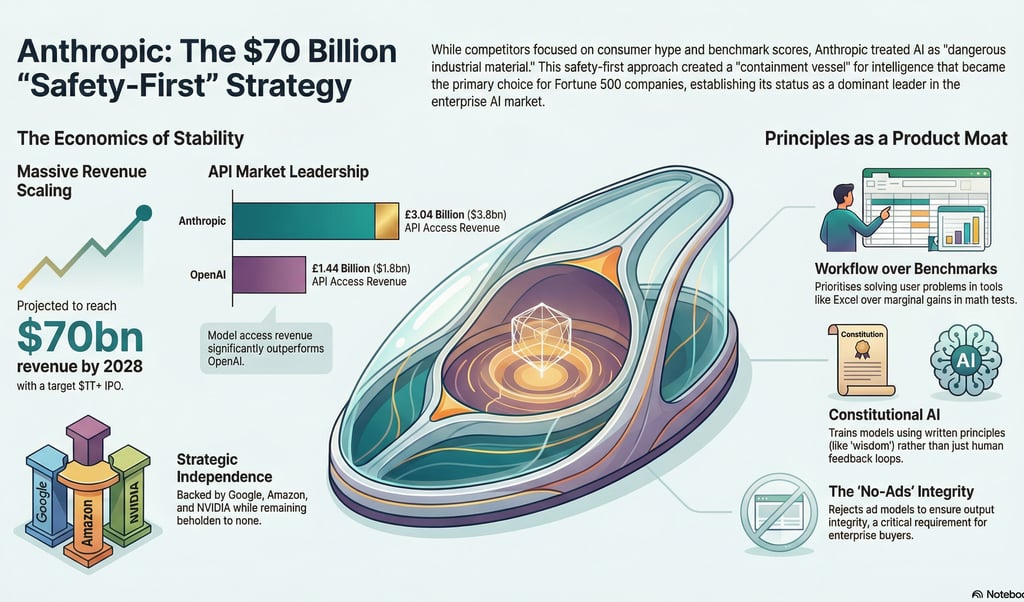

By treating AI like plutonium, Anthropic has accidentally executed the most brilliant enterprise play in the history of the sector. The market is valuing the "brakes" more than the "engine," positioning the company for a $1 trillion IPO precisely because its leadership is terrified of what happens when they actually win. While other labs bleed talent, all six of Anthropic's founders remain in place, driven by a philosophy of containment that has become their greatest commercial asset.

--------------------------------------------------------------------------------

Safety as a Secret Sales Weapon

The irony of the AI boom is that Fortune 500 companies don't actually want raw power; they want control. They prefer "brakes" over "engines." By treating AI as a potential hazard requiring strict industrial protocols, Anthropic has become the only palatable choice for risk-averse global corporations.

The numbers suggest that safety is the ultimate sales closer:

Arrival at Scale: Anthropic hit a staggering $10 billion in annual recurring revenue (ARR) by the end of 2025.

The Moonshot: The company is currently on a trajectory to deliver $70 billion in revenue by 2028.

The Microsoft Hedge: In a move of peak strategic irony, Microsoft—the primary benefactor of OpenAI—paid Anthropic a $500 million "hedge" just to ensure Azure customers could access Claude.
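To put the first two figures side by side, here is a back-of-the-envelope sketch of the yearly growth they imply. It assumes the $10 billion ARR and the $70 billion revenue target are roughly comparable measures, and that the gap between them is three years (end of 2025 to 2028). Both are simplifying assumptions, not claims from the company, and the function name is purely illustrative.

```python
def implied_annual_growth(start: float, end: float, years: float) -> float:
    """Constant yearly growth rate that takes `start` to `end` over `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must all be positive")
    return (end / start) ** (1 / years) - 1


# Figures from the article, in billions of dollars.
START_BILLIONS = 10.0   # ARR at the end of 2025
TARGET_BILLIONS = 70.0  # projected revenue for 2028
YEARS = 3               # assumption: end of 2025 -> 2028

rate = implied_annual_growth(START_BILLIONS, TARGET_BILLIONS, YEARS)
print(f"Implied growth: {rate:.1%} per year")  # Implied growth: 91.3% per year
```

In other words, the trajectory only holds if revenue nearly doubles every year for three years running.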

"The irony is that by treating AI as dangerous industrial material rather than a magical toy, he accidentally built the only product safe enough for the Fortune 500."

--------------------------------------------------------------------------------

Revenue Quality Over Hype

Anthropic is proving that the scientists are out-capitalisting the capitalists. While the industry is distracted by consumer hype, developers are voting with their wallets for stability and a certain je ne sais quoi in model output. Anthropic is "cooking," and the quality of their revenue reflects a maturity the rest of the market lacks.

API Dominance: Anthropic’s model access revenue reached $3.8 billion, more than double OpenAI’s $1.8 billion.

Path to Profit: They are projected to be the first major AI lab to achieve profitability. By avoiding the "engagement trap" of consumer brands and focusing on high-margin enterprise stability, they’ve built a business model that doesn't rely on burning capital to acquire users who don't pay.

--------------------------------------------------------------------------------

Workflows Over Benchmarks

While competitors obsess over "bench-maxxing"—grinding out 0.5% improvements on math tests—Anthropic is winning on "taste." They focus on how the model feels in a workflow, not just how it performs in a lab.

The Workflow Advantage: When Anthropic dropped Claude in Excel, it went viral for a reason.

Google and Microsoft put a chatbot in a spreadsheet to help you find buttons. Anthropic put an agent in the software to solve the actual business problem.

It’s the difference between asking for a pivot table and asking to "Analyze this P&L and highlight the variance." Anthropic owns the "0% to 70%" phase of creation—the terrifying blank page.

In the current ecosystem, Claude Code acts as a Product Founder. It reads the docs, plans the architecture, and builds the foundation. In contrast, OpenAI’s tools often feel like a Staff Engineer: phenomenal at surgical precision and optimizing from 70% to 90%, but less adept at the "wow" moment of initial creation.

--------------------------------------------------------------------------------

The "Team Research" Ethos vs. Ad Models

At Davos, Dario Amodei and Demis Hassabis gave a masterclass in Machiavellian positioning. As the second- and third-place players in the market, they ganged up on the leader by branding themselves "Team Research" against "Team Social Media Founders."

Amodei’s worldview is stark: he believes we are a mere 6 to 8 months away from AI doing everything a software engineer can do. This necessitates a "scientific responsibility" model that rejects the traditional Silicon Valley ad-supported framework.

By rejecting ads, Anthropic avoids the need to sacrifice truth for clicks. Enterprise buyers don't want engagement; they want integrity. A model that doesn't need to keep you scrolling is a model that can afford to be honest.

"There's a long tradition of scientists... thinking of themselves as having responsibility for the technology they built. Not ducking responsibility." — Dario Amodei

--------------------------------------------------------------------------------

Constitutional AI: A Product Spec, Not Just a Shield

Anthropic’s "moat" is built on Constitutional AI, a method that is stunningly obvious in hindsight. Where conventional Reinforcement Learning from Human Feedback (RLHF), the approach most associated with OpenAI, trains models on what human raters "like," Constitutional AI trains Claude against a set of written principles.

This is a product spec disguised as a safety feature. Because LLMs are language-based, Anthropic governs them through language, using a Constitution written for Claude as its primary audience. This document uses human concepts like "virtue" and "wisdom" to guide the model’s reasoning.

By building the "brakes before the engine," Anthropic has created a superior steering mechanism. They’ve realized that a Large Language Model is most effective when its logic is rooted in the principles of human discourse rather than the fickle preferences of a feedback loop.
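For readers who want to see what "governing through language" could look like mechanically, here is a toy sketch of a critique-and-revise loop, loosely in the spirit of the published Constitutional AI idea. Everything in it is hypothetical: the principles are invented for illustration, the function names are my own, and `toy_model` is a stand-in for a real language model. It is not Anthropic's training code, and it leaves out the actual training step entirely.

```python
from typing import Callable

# Hypothetical principles, invented for this sketch.
PRINCIPLES = [
    "Be honest about uncertainty.",
    "Avoid helping with clearly harmful requests.",
]

# Assumption: a "model" is any text-in, text-out function.
Model = Callable[[str], str]


def critique_and_revise(model: Model, prompt: str, principles: list[str]) -> str:
    """Draft a reply, then critique and rewrite it once per written principle."""
    draft = model(prompt)
    for principle in principles:
        critique = model(
            f"Critique this reply against the principle '{principle}':\n{draft}"
        )
        draft = model(
            "Rewrite the reply to address the critique.\n"
            f"Reply: {draft}\nCritique: {critique}"
        )
    return draft


def toy_model(text: str) -> str:
    """Stand-in for a real LLM: returns a label so the control flow is visible."""
    if text.startswith("Critique"):
        return "critique"
    if text.startswith("Rewrite"):
        return "revised reply"
    return "first draft"


result = critique_and_revise(toy_model, "Summarize the Q3 P&L.", PRINCIPLES)
print(result)
```

The point of the sketch is that the steering signal is plain text: change the written principles and the revisions change with them, with no new round of human ratings required.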

--------------------------------------------------------------------------------

Conclusion: The Moat of Taste and Principles

The first wave of the AI race was a contest of raw intellectual horsepower. But horsepower is rapidly becoming a commodity. In the next decade, the only sustainable moats will be taste and principles.

Anthropic has demonstrated that you can win the capitalist race by trying to run it as responsibly as possible. They have captured the cultural zeitgeist and the enterprise market simultaneously by refusing to become a consumer brand or a "mercenary" outfit.

As the loop closes and AI begins to do the research and write the code, the question for every leader is no longer about speed. It is about direction.

Do you value the raw speed of the engine, or the reliability of the steering?